from the the-law-breaking-admin dept

We’ve been pointing out the fundamental contradiction at the heart of mandatory age verification laws for years now. To verify someone’s age online, you have to collect personal data from them. If that someone turns out to be a child, congratulations: you’ve just collected personal data from a child without parental consent. Which is a direct violation of the Children’s Online Privacy Protection Act (COPPA)—the very law that’s supposed to be protecting kids.

So what happens when the agency charged with enforcing COPPA finally notices this obvious problem? If you guessed “they admit the conflict and then just promise not to enforce the law,” you’d be exactly right.

The FTC put out a policy statement last week that is remarkable in what it tacitly concedes:

The Federal Trade Commission issued a policy statement today announcing that the Commission will not bring an enforcement action under the Children’s Online Privacy Protection Rule (COPPA Rule) against certain website and online service operators that collect, use, and disclose personal information for the sole purpose of determining a user’s age via age verification technologies.

The FTC appears to be explicitly acknowledging that age verification technologies involve collecting personal information from users—including children—in a way that would otherwise trigger COPPA liability. If the technology didn’t create a COPPA problem, there would be no need for a policy statement promising non-enforcement. You don’t issue a formal announcement saying “we won’t sue you for this” unless “this” is something you could, in fact, sue people for.

The statement itself tries to dress this up by noting that age verification tech “may require the collection of personal information from children, prompting questions about whether such activities could violate the COPPA Rule.” But “prompting questions” is doing an awful lot of work in that sentence. The answer to those questions is pretty obviously “yes, collecting personal information from children without parental consent violates the rule that says you can’t collect personal information from children without parental consent.” The FTC just doesn’t want to say that part out loud, because then the follow-up question becomes: “so why are you encouraging companies to do it?”

Instead, they’ve decided to create an enforcement carve-out. Do the thing that violates the law, but pinky-promise you’ll only use the data to check the kid’s age, delete it afterward, and keep it secure. Then we won’t come after you. This is the FTC solving a legal contradiction not by asking Congress to fix the underlying law or admitting the technology is fundamentally flawed, but by deciding to selectively not enforce the law it’s supposed to be enforcing.

The honest approach would have been to tell Congress that age verification, as currently conceived, cannot be squared with existing privacy law—and that if lawmakers want it anyway, they need to resolve that conflict themselves rather than asking the FTC to pretend it doesn’t exist.

No such luck.

And boy, do they seem proud of themselves. Here’s Christopher Mufarrige, Director of the FTC’s Bureau of Consumer Protection:

“Age verification technologies are some of the most child-protective technologies to emerge in decades…. Our statement incentivizes operators to use these innovative tools, empowering parents to protect their children online.”

“The most child-protective technologies to emerge in decades.”

Excuse me, what?

This is the kind of statement that sounds authoritative right up until you spend thirty seconds thinking about it. Anyone with any knowledge of security and privacy knows that age verification is anything but “child protective.” It involves a huge invasion of privacy, for extremely faulty technology, that has all sorts of downstream effects that put kids at risk.

Oh, and the FTC seems proud that the vote for this was unanimous, though it’s worth noting that Donald Trump fired the two Democratic members of the FTC and has made no apparent effort to replace them, even though Congress designed the FTC to have five commissioners, no more than three of whom may come from the same party. A unanimous vote among the remaining two Republicans is a strange thing to brag about.

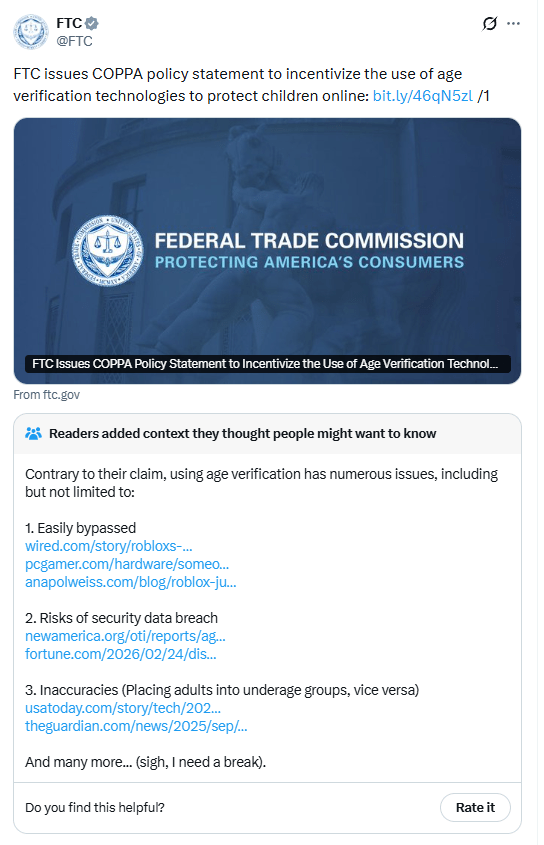

The FTC even posted about this on X, and the response was… well, let me just show you:

If you can’t see that, the main part to pay attention to is not the tweet from the FTC itself, but the Community Note attached to it (under the way Community Notes works, a note needs widespread consensus among users before it is appended to the public tweet):

Readers added context they thought people might want to know

Contrary to their claim, using age verification has numerous issues, including but not limited to:

1. Easily bypassed

2. Risks of security data breach

3. Inaccuracies (Placing adults into underage groups, vice versa)

And many more… (sigh, I need a break).

Yeah, we all need a break.

That Community Note does a better job explaining the state of age verification technology than the FTC’s entire Bureau of Consumer Protection. It methodically lists out the problems: kids easily bypass these systems, the collected data creates massive security breach risks, and the technology produces wildly inaccurate results, sorting adults into underage groups and minors into adult ones. When the consensus-driven crowdsourced fact-check on your own announcement is more informative than the announcement itself, maybe it’s time to reconsider the announcement.

But let’s say, for the sake of argument, that the technology worked perfectly. Would mandatory age verification still be a good idea?

Even a flawless system wouldn’t solve the deeper problems with this approach or the harm it does to kids. Even UNICEF (UNICEF!) has been warning that age restriction approaches can actively harm the children they’re supposed to protect. After Australia’s social media ban for under-16s went into effect, UNICEF put out a statement that could not have been more clear about the risks:

“While UNICEF welcomes the growing commitment to children’s online safety, social media bans come with their own risks, and they may even backfire,” the agency said in a statement.

For many children, particularly those who are isolated or marginalised, social media is a lifeline for learning, connection, play and self-expression, UNICEF explained.

Moreover, many will still access social media – for example, through workarounds, shared devices, or use of less regulated platforms – which will only make it harder to protect them.

So the actual child welfare experts are saying that age verification can backfire, push kids into less safe spaces, and should never be treated as a substitute for real safety measures. Meanwhile, the FTC is calling the same technology “the most child-protective” thing to come along in a generation and is waiving its own enforcement authority to encourage more of it.

What we have here is a federal agency that has identified a direct conflict between the law it enforces and the policy outcome it wants. Rather than grappling with what that conflict means—maybe age verification as currently conceived just doesn’t work within the existing legal framework, and for good reason—the FTC has chosen to simply look the other way. The message to companies is clear: go ahead and collect data from kids to figure out if they’re kids. We know that violates COPPA. We don’t care. We like age verification more than we like enforcing our own rules.

That’s a hell of a policy position for the agency that’s supposed to be the last line of defense for children’s privacy online.

Filed Under: age verification, coppa, ftc, think of the children
