In brief

- PAI is a long-form AI video system designed for cinematic storytelling with consistent characters, scenes, and narrative flow.

- Its structured pipeline—characters, storyboard, rendering, and AI editing—offers granular creative control rare in current AI video tools.

- The results can be strikingly realistic, but slow generation times, costly credits, and occasional render failures remain major drawbacks.

Most AI video tools are built for the highlight reel. Sora, Kling, Luma, Runway—all are optimized for the moment of spectacle: a striking five-second clip, a visual experiment that looks impressive on social media.

What they rarely solve is the part that actually matters to professional storytellers: scene-to-scene consistency, character identity across cuts, and granular creative control that does not require starting over every time something is slightly off.

That is the gap Utopai Studios is going after with PAI. Its team, drawn from Google Research, Meta Superintelligence, Amazon AGI, and Adobe Firefly, built PAI specifically for long-form cinematic production: up to 16 shots in a single narrative flow, outputs up to one minute in length, and resolution up to 4K.

It also includes built-in copyright protection that blocks generation against protected IP, copyrighted characters, and real public likenesses—a feature aimed at studios and professionals who cannot afford accidental infringement.

PAI just opened to the public earlier this month. We got in, spent time with every stage of the workflow, and lost some credits along the way. Here is the full picture.

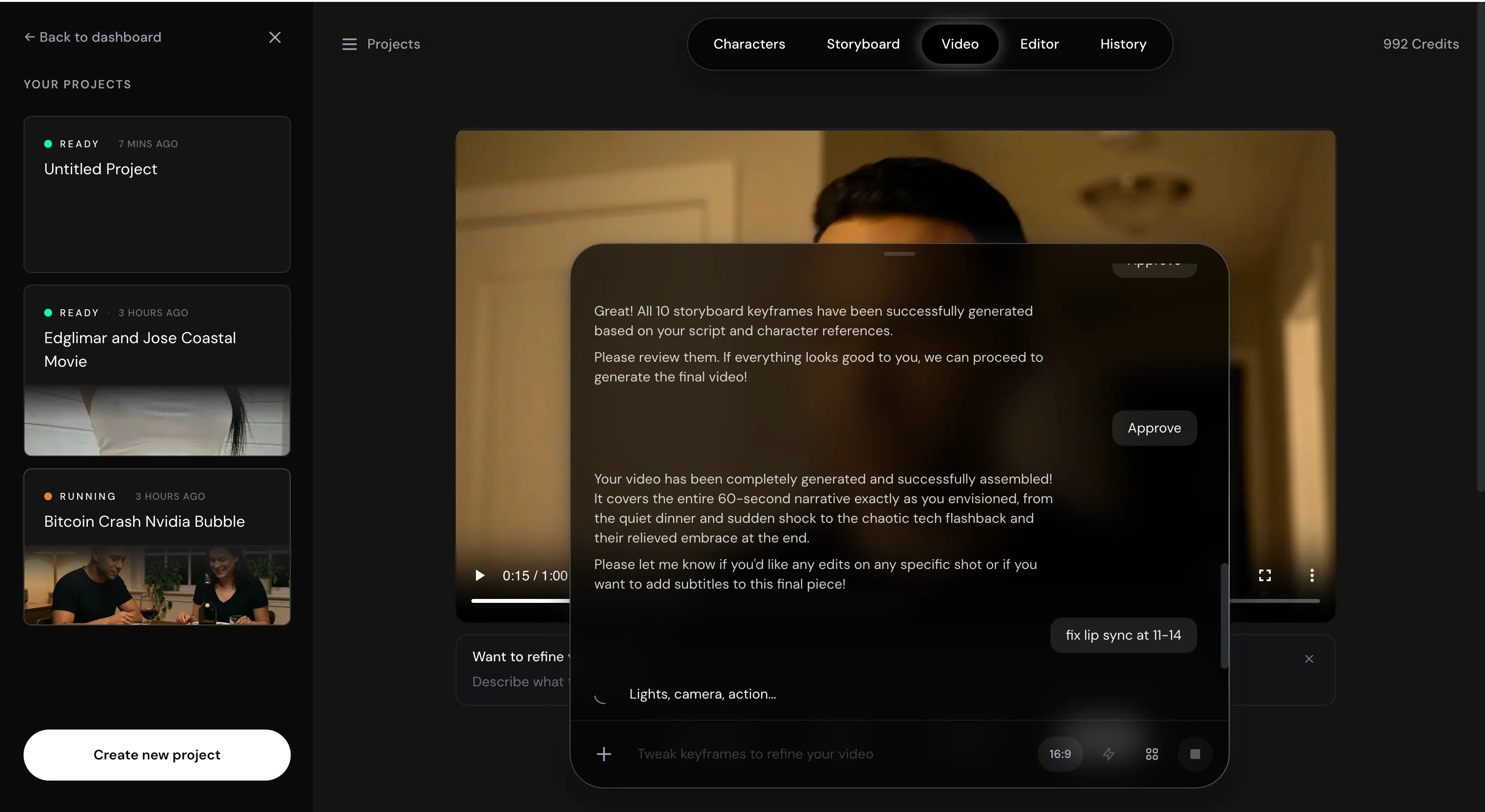

Interface

The main screen looks like ChatGPT or any typical chatbot interface. From there, you navigate five tabs: Characters, Storyboard, Video, Editor, and History.

But don’t let this fool you: PAI is not a prompt-and-wait tool like Sora or Veo. It is a structured production pipeline with a natural language layer on top, and the distinction matters—a lot—when credits are on the line.

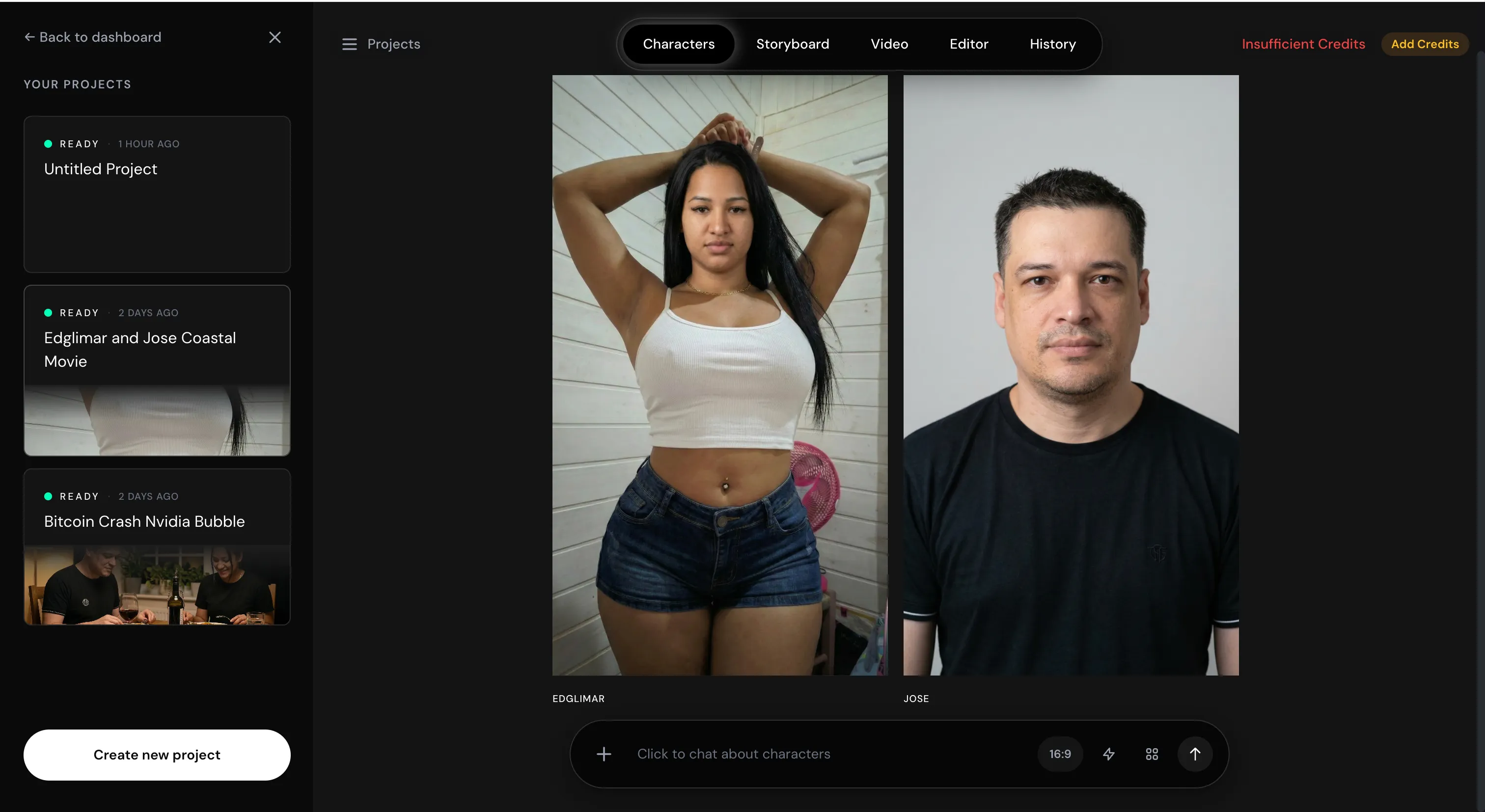

Characters

This is the strongest feature in the entire suite, and possibly the most impressive character generation system currently available in any AI video tool.

Users can either let the model create characters on its own or feed it reference images to work from. What it does is not face-swapping—it does not transplant a real person’s likeness the way deepfake tools do. Instead, it generates entirely new models that are extremely close to the reference, without the legal and ethical problems that come with direct face replacement. All outputs are watermarked with SynthID.

Most AI-generated characters have a waxy skin quality that gives them away immediately. PAI’s do not, or at least not on the same scale. The skin texture looks realistic, as does the way light interacts with the face, and the details are strong. Whether this comes from a proprietary model or an unusually refined generation workflow, the results speak for themselves.

Character editing is done through natural language: I generated a character using my wife’s appearance as a reference, but found the result way too thin—so I asked the model to adjust the body proportions to better match the reference. It understood exactly what I meant and corrected it.

The one consistent caveat: it is slow. Even basic character image generation takes a couple of minutes per run.

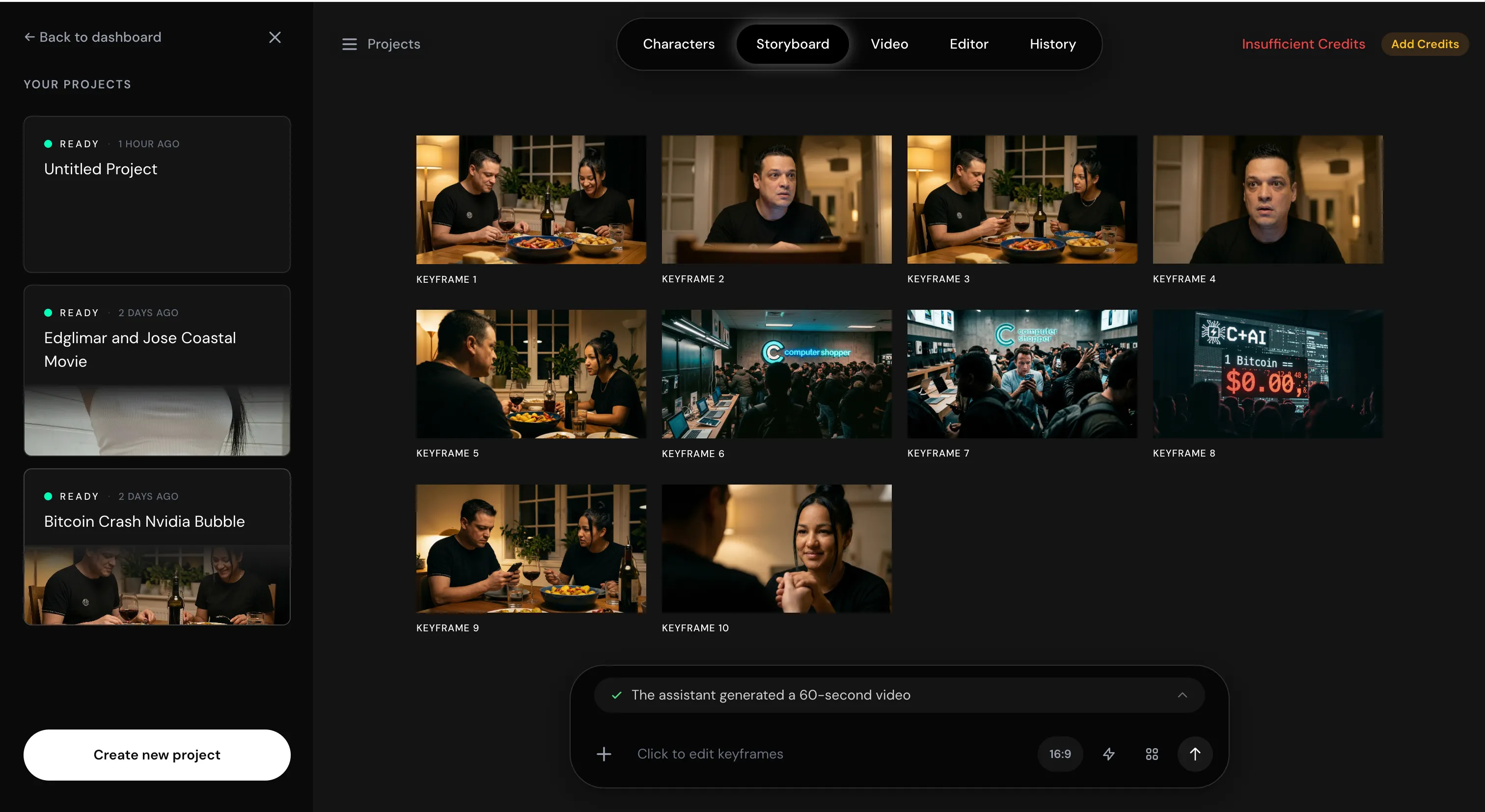

Storyboard

You can run the storyboard on auto and have the model do everything for you, but that is not what it was built for.

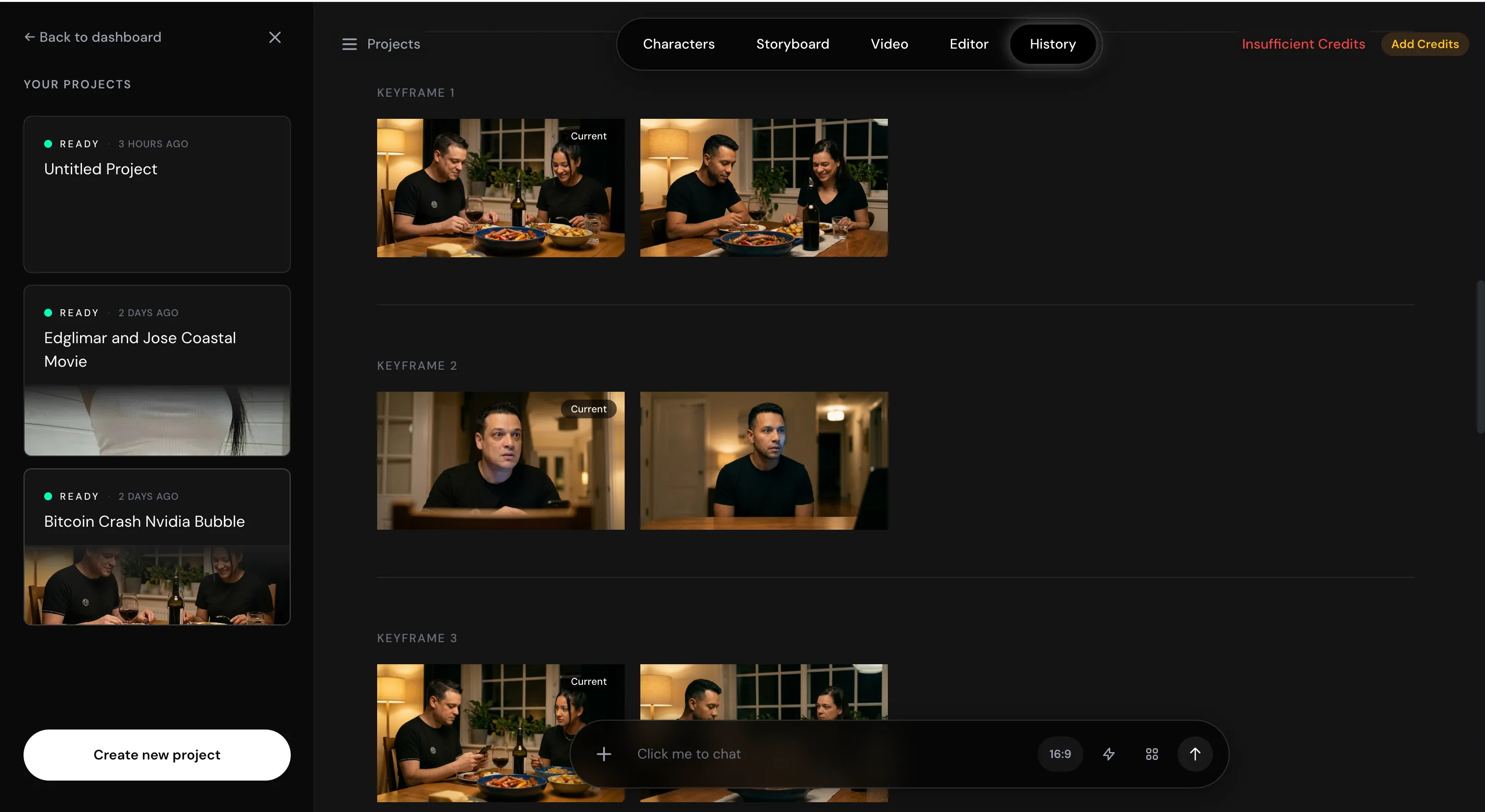

PAI rewards detailed input here. The more you explain—what the characters do across each scene, what they say, and how the story moves—the better the model works. Feed it that specificity and it will use AI to expand on the details, then construct around a dozen keyframes. Each frame comes with a scene image and a description of what is happening at that exact moment: character actions, dialogue, and visual composition.

You can edit each keyframe individually before committing to anything. The control is genuinely granular. Once you are satisfied, you tell the model to proceed, and it asks for final confirmation before rendering. This review-before-render flow is smart design. It forces deliberate decisions and catches problems before they become expensive ones.

That said, even the smallest edit takes time and burns credits. Move carefully.

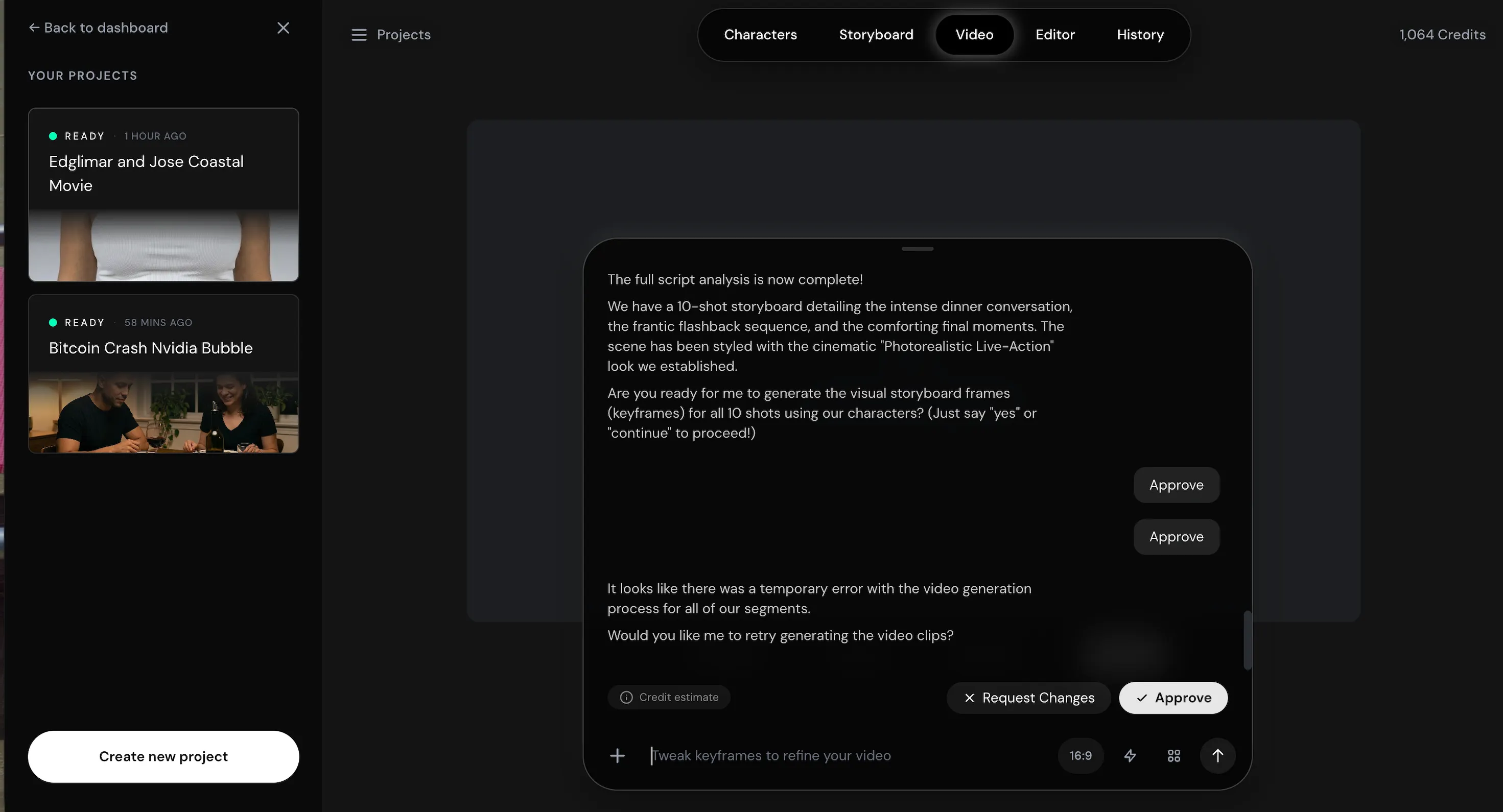

Video generation

When it works, a render takes around 30 minutes to produce one full minute of video. The output quality justifies that wait. Camera angles change naturally and respect the established keyframes, lighting is natural, and characters do not have the hollow, vacant quality that makes most AI video generations feel lifeless. Voices are consistent across scenes, with proper intonation that holds even after cuts to other elements.

When the camera refocuses on a character after showing something else, they come back looking exactly as they left. Background scenery stays stable throughout, and while warps and artifacts exist, they are minor. One weakness: The model does not handle in-video text well. It can produce basic text elements, but do not rely on it for anything that requires precise on-screen typography.

Here is one sample of a generation made with everything automatically handled by the model.

Now for the harder part. One of our test sequences failed three consecutive times. The first attempt took around 45 minutes, consumed credits as though a full video had been generated, and produced an empty result. We told the chatbot it had not generated anything. It acknowledged the error and restarted.

An hour later, still nothing. We tried a third time. Same outcome. Three attempts, significant credit loss, and zero footage. By the time we gave up, we were almost out of credits entirely and had to move on.

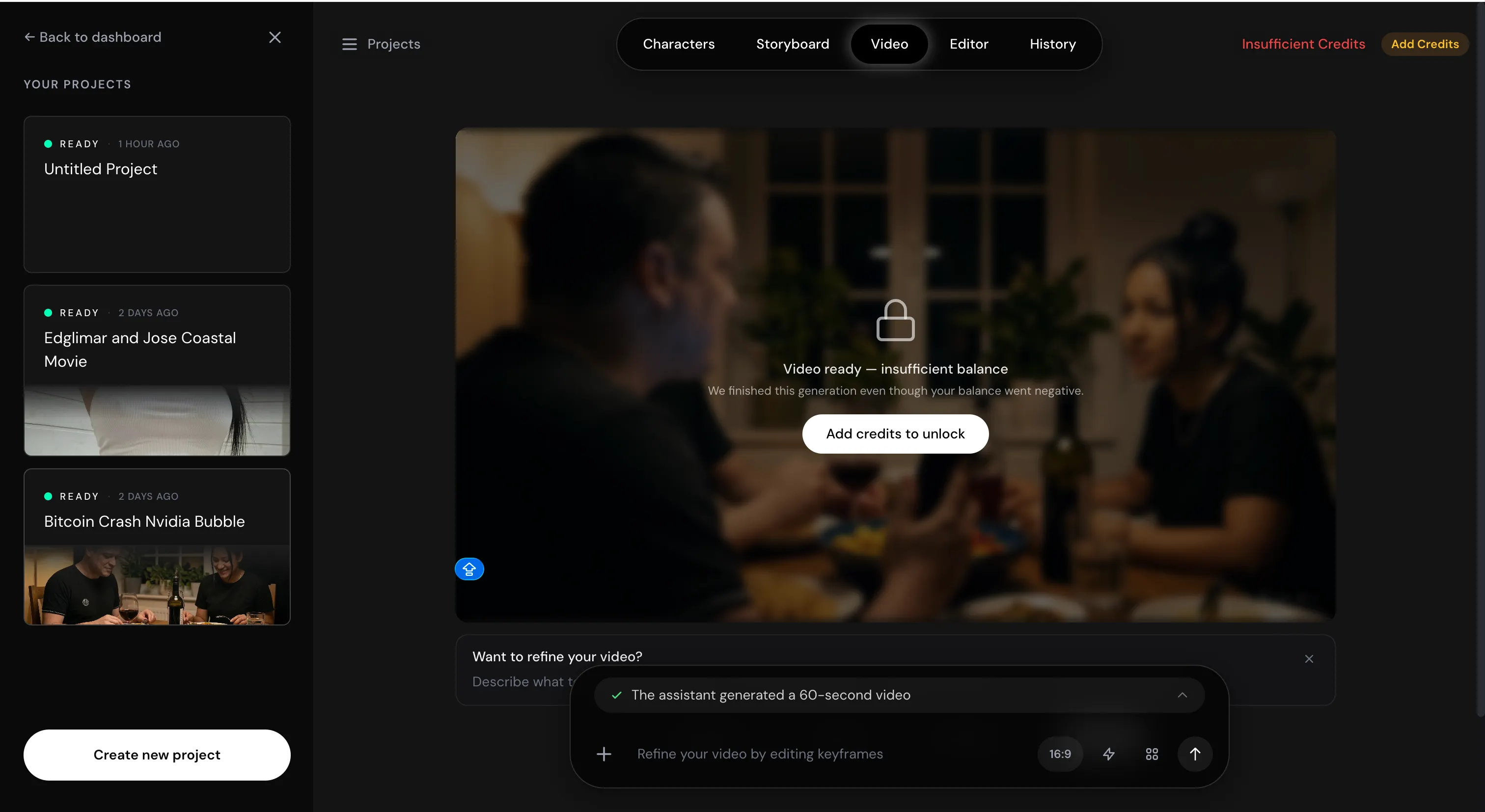

This is not a minor bug when you are paying real money and working within professional timelines. The interface acknowledges that errors happen, but experiencing one directly is a different thing, especially since you need a positive balance to download a video, which becomes a problem if the generation process has already consumed your credits.

In our first test with everything auto-selected, I made a user error: I fed two reference photos without specifying which character should use which, and the model assigned them in reverse—the male character (me) was generated from the female reference (my wife), and vice versa.

Set aside that traumatic image of me as a woman: the resulting video still ended up being the most consistently rendered long-form AI video I have produced. Even with the wrong references, the model held visual and tonal continuity from scene to scene. That says a lot about the underlying architecture.

The lesson from both experiences is the same: typical AI video tools decide everything for you, which means you do not have to think much, but you also have to accept whatever they decide. PAI gives you control. And with that control comes full responsibility for what you put in.

Editor

Once a video is complete, the Editor tab lets you direct revisions entirely in natural language. Insert elements into a scene, delete them, change colors, adjust lighting, rephrase dialogue, or update the lip sync, and the model re-renders accordingly. It genuinely understands what you are asking.

This is not a post-processing filter. It is iterative, AI-driven revision at the scene level. The ability to describe an editorial intent and receive corrected footage in response changes the creative relationship between a director and their material entirely. This feature, more than anything else in PAI, looks like where AI video editing may be going in the near future.

For example, after watching the first video, I asked the model to fix the swapped-gender mistake using the proper references.

Once processed, it went from this:

To this:

History

The History tab logs a full timeline of every interaction: prompts, edits, render attempts, everything.

For solo creators, it provides useful context. For teams, it may be a real collaboration layer where different users can see how colleagues have directed the model, understand what worked and what did not, and continue from a shared creative record.

Pricing and bottom line

PAI pricing is $100 for 10,000 credits. In our tests, 2,000 credits covered four videos (one completed, three not) totaling four minutes—two characters generated per video with multiple iterations before render, storyboard development on rich and detailed prompts, and around two rounds of post-render editing.
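To put those numbers in dollar terms, here is a rough back-of-the-envelope sketch in Python. The price, credit totals, and video counts come from our tests above; the per-video figures are simple averages we derived, not anything PAI reports, and they fold in the character, storyboard, and editing costs.

```python
# Figures from the review: $100 buys 10,000 credits; our session used 2,000.
PACK_PRICE_USD = 100
PACK_CREDITS = 10_000
CREDITS_SPENT = 2_000
VIDEOS_ATTEMPTED = 4   # one completed, three failed
VIDEOS_COMPLETED = 1


def credits_to_usd(credits):
    """Convert credits to dollars at the quoted pack rate."""
    return credits * PACK_PRICE_USD / PACK_CREDITS


spent_usd = credits_to_usd(CREDITS_SPENT)
# Averages only: the session total also covers characters, storyboards, and edits.
usd_per_attempt = spent_usd / VIDEOS_ATTEMPTED
usd_per_completed = spent_usd / VIDEOS_COMPLETED

print(f"Session cost: ${spent_usd:.2f}")
print(f"Average per attempted video: ${usd_per_attempt:.2f}")
print(f"Effective cost per completed video: ${usd_per_completed:.2f}")
```

By this estimate, the session cost about $20, or roughly $5 per attempt, but because only one render finished, the effective price of usable footage was closer to $20 per video.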

Overall, PAI feels like a professional tool built for people who really take AI video seriously. It is slow, unforgiving of inexperience—it could frankly use a nice tutorial—and capable of burning your budget very quickly. The interface is not fail-proof, and the system will punish you for going in underprepared.

After a first session spent learning how it thinks, our second round of testing produced very surprising and pleasing results—the kind that typically require face-swap techniques, rounds of trial and error, and edits in post.

For professional video creators, to whom continuity, IP safety, and cinematic quality are non-negotiable elements, PAI is the best long-form AI video system available right now. Fix the reliability issues, and nothing else comes close, at least for now.
