from the trusting-the-wrong-people dept

Within hours on Friday, the Pentagon blacklisted one AI company for refusing to drop its safety commitments on surveillance and autonomous weapons, then turned around and praised a competitor for signing a deal that supposedly preserved those exact same commitments.

This confused some people. Why would the Pentagon seek to destroy one company over the same terms it agreed to with its largest competitor just hours later?

There’s an answer, though: the words in OpenAI’s contract likely don’t mean what most people think they mean.

This isn’t speculation about future abuse. It’s the documented operating procedure of the NSA for decades—a practice exposed repeatedly by whistleblowers, litigated in courts, and eventually confirmed in declassified documents.

OpenAI published the broad contours of its agreement, positioning it as having more guardrails than any previous deal, including Anthropic’s. The company lists three “red lines”:

No use of OpenAI technology for mass domestic surveillance.

No use of OpenAI technology to direct autonomous weapons systems.

No use of OpenAI technology for high-stakes automated decisions (e.g. systems such as “social credit”).

Sounds great, right? Who wouldn’t support that? The problem becomes apparent only when you read the actual contract language OpenAI published, and specifically, the legal authorities it cites as defining what constitutes “lawful” behavior by the Pentagon:

For intelligence activities, any handling of private information will comply with the Fourth Amendment, the National Security Act of 1947 and the Foreign Intelligence and Surveillance Act of 1978, Executive Order 12333, and applicable DoD directives requiring a defined foreign intelligence purpose. The AI System shall not be used for unconstrained monitoring of U.S. persons’ private information as consistent with these authorities.

If you’ve spent any time studying how the NSA actually operates, that reference to Executive Order 12333 should make the hairs on the back of your neck stand up. Because EO 12333 is, in practice, one of the largest loopholes for surveilling Americans’ communications that the intelligence community possesses. And by defining its “red lines” as compliance with these authorities, OpenAI has effectively adopted the intelligence community’s dictionary—a dictionary in which common English words have been carefully redefined over decades to permit the very things they appear to prohibit.

We’ve covered this extensively over the years, because understanding how the NSA plays word games is critical to understanding basically anything it claims about surveillance. As a former State Department official, John Napier Tye, explained back in 2014 in the Washington Post:

Executive Order 12333 contains no such protections for U.S. persons if the collection occurs outside U.S. borders. Issued by President Ronald Reagan in 1981 to authorize foreign intelligence investigations, 12333 is not a statute and has never been subject to meaningful oversight from Congress or any court….

Unlike Section 215, the executive order authorizes collection of the content of communications, not just metadata, even for U.S. persons. Such persons cannot be individually targeted under 12333 without a court order. However, if the contents of a U.S. person’s communications are “incidentally” collected (an NSA term of art) in the course of a lawful overseas foreign intelligence investigation, then Section 2.3(c) of the executive order explicitly authorizes their retention. It does not require that the affected U.S. persons be suspected of wrongdoing and places no limits on the volume of communications by U.S. persons that may be collected and retained.

That phrase “incidentally collected” is doing enormous work in that paragraph. In NSA-speak, “incidental” collection doesn’t mean “oops, we accidentally grabbed that.” It means “we were targeting a foreigner, and your data happened to be in the giant pile we vacuumed up, so we get to keep it.” As Tye put it:

“Incidental” collection may sound insignificant, but it is a legal loophole that can be stretched very wide. Remember that the NSA is building a data center in Utah five times the size of the U.S. Capitol building, with its own power plant that will reportedly burn $40 million a year in electricity.

“Incidental collection” might need its own power plant.

This is what makes OpenAI’s assurance that its technology won’t be used for “mass domestic surveillance” feel hollow. Under the legal framework OpenAI has explicitly agreed to operate within, the NSA can target a foreign person, scoop up vast quantities of Americans’ communications in the process, retain all of it, and search through it later—and none of that counts as “surveillance of U.S. persons” by the government’s own definitions.

The folks over at EFF explained this same dynamic back in 2013, walking through how the word “target” itself had been stretched beyond recognition:

In plain English: the NSA believes it not only can (1) intercept the communications of the target, but also (2) intercept communications about a target, even if the target isn’t a party to the communication. The most likely way to assess if a communication is “about” a target is to conduct a content analysis of communications, probably based on specific search terms or selectors.

And that, folks, is what we call a content dragnet.

Importantly, under the NSA’s rules, when the agency intercepts communications about a target, the author or speaker of those communications does not, thereby, become a target: the target remains the original, non-US person. But, because the target remains a non-US person, the most robust protection for Americans’ communications under the FISA Amendments Act (and, indeed, the primary reassurance the government has given about the surveillance) flies out the window.

So when OpenAI’s contract says its technology will be used in compliance with these authorities, and “shall not be used for unconstrained monitoring of U.S. persons’ private information as consistent with these authorities,” what does that actually mean? It means: the government gets to define what counts as “unconstrained monitoring” and what counts as “U.S. persons’ private information,” using definitions that have been purpose-built over decades to permit exactly the kind of bulk collection that a reasonable person would call “mass domestic surveillance.”

Note also the careful qualifier: “unconstrained monitoring.” That word “unconstrained” is doing a lot of heavy lifting. Under the government’s framework, surveillance that operates under any constraint—even the fig leaf of “we were targeting a foreigner and your data just showed up”—is, by definition, constrained. So the contract arguably permits all of the surveillance the NSA already does, because the NSA would say none of it is unconstrained. It all has rules! The rules just happen to permit collecting and keeping basically everything.

This is precisely where the breakdown between Anthropic and the Pentagon becomes illuminating. According to reporting in the New York Times, the final sticking point was something much more concrete:

Mr. Michael, who was on a call with Anthropic executives at the time, said the Pentagon wanted the company to allow for the collection and analysis of unclassified, commercial bulk data on Americans, such as geolocation and web browsing data, people briefed on the negotiations said.

Anthropic told the Pentagon that it was willing to let its technology be used by the National Security Agency for classified material collected under the Foreign Intelligence Surveillance Act. But the company wanted a legally binding promise from the Pentagon not to use its technology on unclassified commercial data.

Apparently, Anthropic was willing to let the NSA use its AI on classified intelligence material collected under FISA. That’s already a significant concession to the national security establishment. What Anthropic wouldn’t do is let the Pentagon use its AI to trawl through the commercially available data that data brokers sell about Americans—your geolocation data, your browsing history, your credit card transactions. Anthropic wanted a legally binding commitment that this wouldn’t happen.

The Pentagon said no. And OpenAI’s published contract language is conspicuously silent on commercial bulk data.

OpenAI’s announcement instead points to compliance with EO 12333 and other existing authorities as its safeguard. But as Tye noted back in 2014, the intelligence community has historically used the distinction between data collected inside and outside the United States to avoid oversight entirely—and in an era where your email from New York to New Jersey bounces through servers in Brazil, Japan, and the UK, that distinction has become essentially meaningless:

A legal regime in which U.S. citizens’ data receives different levels of privacy and oversight, depending on whether it is collected inside or outside U.S. borders, may have made sense when most communications by U.S. persons stayed inside the United States. But today, U.S. communications increasingly travel across U.S. borders — or are stored beyond them. For example, the Google and Yahoo e-mail systems rely on networks of “mirror” servers located throughout the world. An e-mail from New York to New Jersey is likely to wind up on servers in Brazil, Japan and Britain. The same is true for most purely domestic communications.

Executive Order 12333 contains nothing to prevent the NSA from collecting and storing all such communications — content as well as metadata — provided that such collection occurs outside the United States in the course of a lawful foreign intelligence investigation. No warrant or court approval is required, and such collection never need be reported to Congress.

So OpenAI’s “red line” against “mass domestic surveillance” is defined by compliance with legal authorities that, in practice, permit the collection of enormous quantities of Americans’ communications data without warrants, without court approval, and without congressional oversight. That’s the “safeguard.”

Then there’s the autonomous weapons question. OpenAI makes a big deal about the fact that its deployment is “cloud-only” and therefore cannot power autonomous weapons, which would require “edge deployment.” This sounds like a meaningful technical limitation. Anthropic initially considered a similar distinction and, as the Atlantic reported, rejected it:

According to my source, at one point during the negotiation, it was suggested that this impasse over autonomous weapons could be resolved if the Pentagon would simply promise to keep the company’s AI in the cloud, and out of the weapons themselves. The argument was that the models could be kept outside so-called edge systems, be they drones or other kinds of autonomous weapons. They might synthesize intelligence before an operation, but they wouldn’t actually be making kill decisions. The AI’s hands would be clean of any deadly errors that the drones made.

But Anthropic wasn’t satisfied by this solution. The company reasoned that in modern military AI architectures, the distinction between the cloud and the edge is no longer all that defined. It’s less a wall and more of a gradient. Drones on the battlefield can now be orchestrated through mesh networks that include cloud data centers. And although they’re designed to survive on their own, the military’s impulse will always be to maintain as much connectivity between them and the most powerful models in the cloud; the better the connection, the more intelligent the machine.

Indeed, the Pentagon has been working hard to keep the cloud as involved as possible. Part of the goal of its Joint Warfighting Cloud Capability is to push computing resources closer to the fight. The AI may be sitting in an Amazon Web Services server in Virginia rather than a war zone overseas, but if it’s making battlefield decisions, from an ethical standpoint, that’s a distinction without much difference. Anthropic ended up discarding the idea that the cloud provision could resolve the problem. It didn’t take much analysis, according to the source close to the talks.

So the “cloud-only” limitation that forms the centerpiece of OpenAI’s assurance on autonomous weapons is the same limitation that Anthropic considered and quickly dismissed as inadequate. The Pentagon’s own infrastructure programs are specifically designed to blur the line between cloud and edge. An AI model sitting halfway around the world feeding targeting decisions to a drone swarm through a mesh network is, from any functional standpoint, directing an autonomous weapons system. But under OpenAI’s framing, because the model is technically in “the cloud,” the red line remains intact.

This is the pattern. At every turn, OpenAI’s “red lines” are defined not by what a reasonable person would understand those words to mean, but by the government’s carefully constructed legal definitions—definitions that have been engineered, refined, and battle-tested over decades to allow the intelligence community to do the thing while truthfully claiming it’s not doing the thing.

OpenAI either doesn’t understand this history, or (far more likely) understands it perfectly well and has decided that adopting the government’s dictionary is good enough cover. As the Atlantic noted, nearly 100 OpenAI employees signed an open letter indicating they supported the same red lines as Anthropic. They may want to look very carefully at whether the company’s contract actually delivers what it promises.

OpenAI’s defenders will point to the layered safeguards: the cloud-only architecture, the contractual language, the cleared OpenAI engineers in the loop. These aren’t nothing. But they all operate within a framework where the definitions of “surveillance,” “targeting,” and “lawful” have already been handed to the government. Human oversight matters, but not when the humans are operating under rules designed to allow the thing you’re supposedly preventing.

The most revealing line in OpenAI’s entire announcement might be this, from its FAQ:

It was clear in our interaction that the DoW considers mass domestic surveillance illegal and was not planning to use it for this purpose.

And if you’re wondering whether this administration’s assurances about how it will use AI tools are worth the paper they’re printed on, the same Defense Department that just signed this deal launched strikes on Iran within hours—without congressional authorization. The people OpenAI is trusting to self-police their surveillance activities are the same people who apparently consider laws constraining military action more of a suggestion.

The Department of Defense “considers mass domestic surveillance illegal.” Well, sure it does—by its own definitions. The NSA has always considered its activities legal by its own definitions. That’s the whole trick. The NSA would tell you, with a straight face, that it has never conducted mass domestic surveillance, because under its interpretations of the relevant authorities, what it does doesn’t count as “mass domestic surveillance.” It’s “targeted collection” with “incidental” acquisition of U.S. persons’ data that gets retained under minimization procedures approved by the Attorney General, not a court. Totally different thing. Just ask them.

Anthropic looked at the government’s assurances and said: we know how this works, we’ve seen this movie, we want legally binding commitments that go beyond the existing authorities. OpenAI looked at those same assurances and said: sounds good to us.

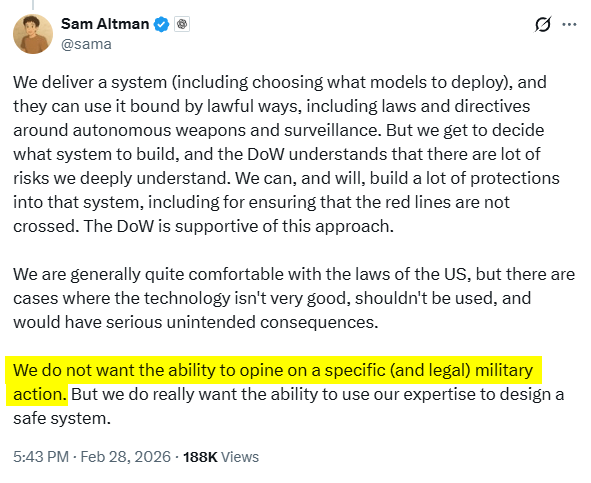

Which brings us to how OpenAI keeps trying to shape this message. Here’s Sam Altman downplaying all of this:

“We do not want the ability to opine on a specific (and legal) military action. But we do really want the ability to use our expertise to design a safe system.”

That’s a perfectly reasonable-sounding position. The problem is that “legal” is doing all the work in that sentence, and as we’ve spent the last decade learning, the government’s definition of “legal” when it comes to surveillance and military technology has been stretched so far beyond common understanding that the word has become almost meaningless as a safeguard.

OpenAI didn’t hold the same line as Anthropic. It drew a line on a map using coordinates provided by the very entity it was supposed to be constraining.

Filed Under: defense department, executive order 12333, incidental collection, mass surveillance, nsa, pete hegseth, sam altman, surveillance

Companies: anthropic, openai
