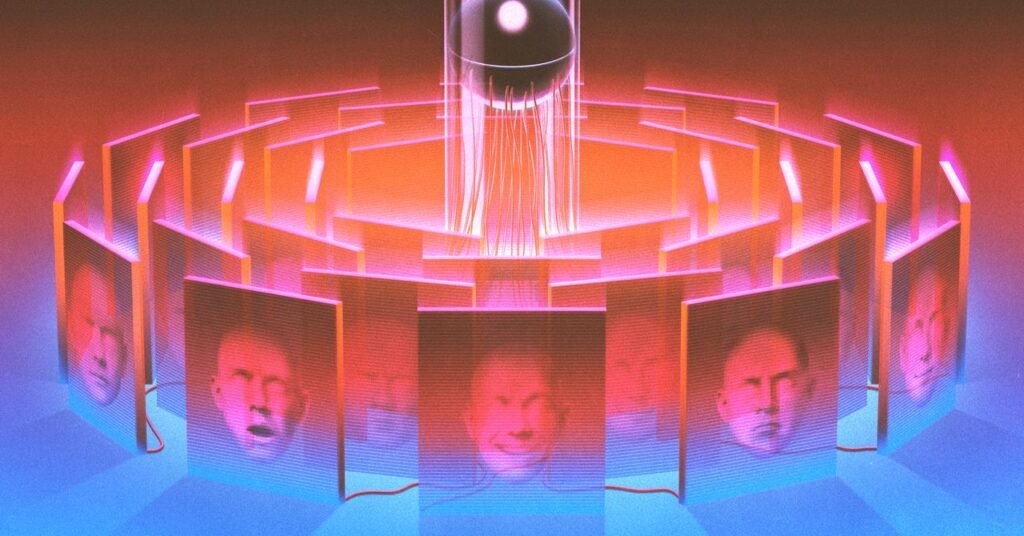

Still, the models are improving much faster than the efforts to understand them. And the Anthropic team admits that as AI agents proliferate, the theoretical criminality of the lab grows ever closer to reality. If we don’t crack the black box, it might crack us.

“Most of my life has been focused on trying to do things I believe are important. When I was 18, I dropped out of university to support a friend accused of terrorism, because I believe it’s most important to support people when others don’t. When he was found innocent, I noticed that deep learning was going to affect society, and dedicated myself to figuring out how humans could understand neural networks. I’ve spent the last decade working on that because I think it could be one of the keys to making AI safe.”

So begins Chris Olah’s “date me doc,” which he posted on Twitter in 2022. He’s no longer single, but the doc remains on his GitHub site “since it was an important document for me,” he writes.

Olah’s description leaves out a few things, including that, despite not earning a university degree, he’s an Anthropic cofounder. A less significant omission is that he received a Thiel Fellowship, which bestows $100,000 on talented dropouts. “It gave me a lot of flexibility to focus on whatever I thought was important,” he told me in a 2024 interview. Spurred by reading articles in WIRED, among other things, he tried building 3D printers. “At 19, one doesn’t necessarily have the best taste,” he admitted. Then, in 2013, he attended a seminar series on deep learning and was galvanized. He left the sessions with a question that no one else seemed to be asking: What’s going on in those systems?

Olah had difficulty interesting others in the question. When he joined Google Brain as an intern in 2014, he worked on a strange product called Deep Dream, an early experiment in AI image generation. The neural net produced bizarre, psychedelic patterns, almost as if the software were on drugs. “We didn’t understand the results,” says Olah. “But one thing they did show is that there’s a lot of structure inside neural networks.” At least some elements, he concluded, could be understood.

Olah set out to find such elements. He cofounded a scientific journal called Distill to bring “more transparency” to machine learning. In 2018, he and a few Google colleagues published a paper in Distill called “The Building Blocks of Interpretability.” They’d identified, for example, that specific neurons encoded the concept of floppy ears. From there, Olah and his coauthors could figure out how the system knew the difference between, say, a Labrador retriever and a tiger cat. They acknowledged in the paper that this was only the beginning of deciphering neural nets: “We need to make them human scale, rather than overwhelming dumps of information.”

The paper was Olah’s swan song at Google. “There actually was a sense at Google Brain that you weren’t very serious if you were talking about AI safety,” he says. In 2018 OpenAI offered him the chance to form a permanent team on interpretability. He jumped. Three years later, he joined a group of his OpenAI colleagues to cofound Anthropic.
